How Evidence Shaped aprendIA

From Research Findings to Product Decisions — in Practice

Teachers in Borno state during a feedback session, February 2026

What we changed, and why

aprendIA is built for teachers in crisis- and conflict-affected contexts: low bandwidth, frequent interruptions, limited time, and very little room for friction. Two evidence streams, a qualitative evidence review and a product analytics review, did not just validate our assumptions; they changed the shape of the product.

Here are the five most consequential shifts:

1. We rebuilt onboarding as product work, not setup

The finding: Each additional first-day session was associated with 34% higher odds of a teacher returning on Day 2. First-session depth, not overall completion, was the strongest and most actionable predictor of retention.

What changed: We stopped treating onboarding as product setup. We redesigned it as the first product experience. The current flow prioritizes a fast, meaningful first exchange over system explanation: teachers choose how they want to use aprendIA today (learn, solve, or access tools) before they've had to understand how it works. The first moment is designed to deliver a small win before asking for any commitment.

2. We tightened the mentor value proposition

The finding: Teachers were not simply using aprendIA as an answer engine, as we had thought they might. They were looking for practical guidance, structure, and, in many cases, affirmation and emotional support. The mentor framing resonated, but only if it was legible and bounded.

Teachers using aprendIA, February 2026

What changed: We made "mentor" specific rather than aspirational. aprendIA is not a generic assistant, not a linear course, and not an open-ended chatbot. The product now has curated prompts, supervised chatflows, grounded content, and explicit guardrails, providing support that is practical, respectful, and reliable under real constraints. This led to the creation of a teacher wellbeing module and to better connections between teachers' immediate support needs and longer-term skill-building opportunities.

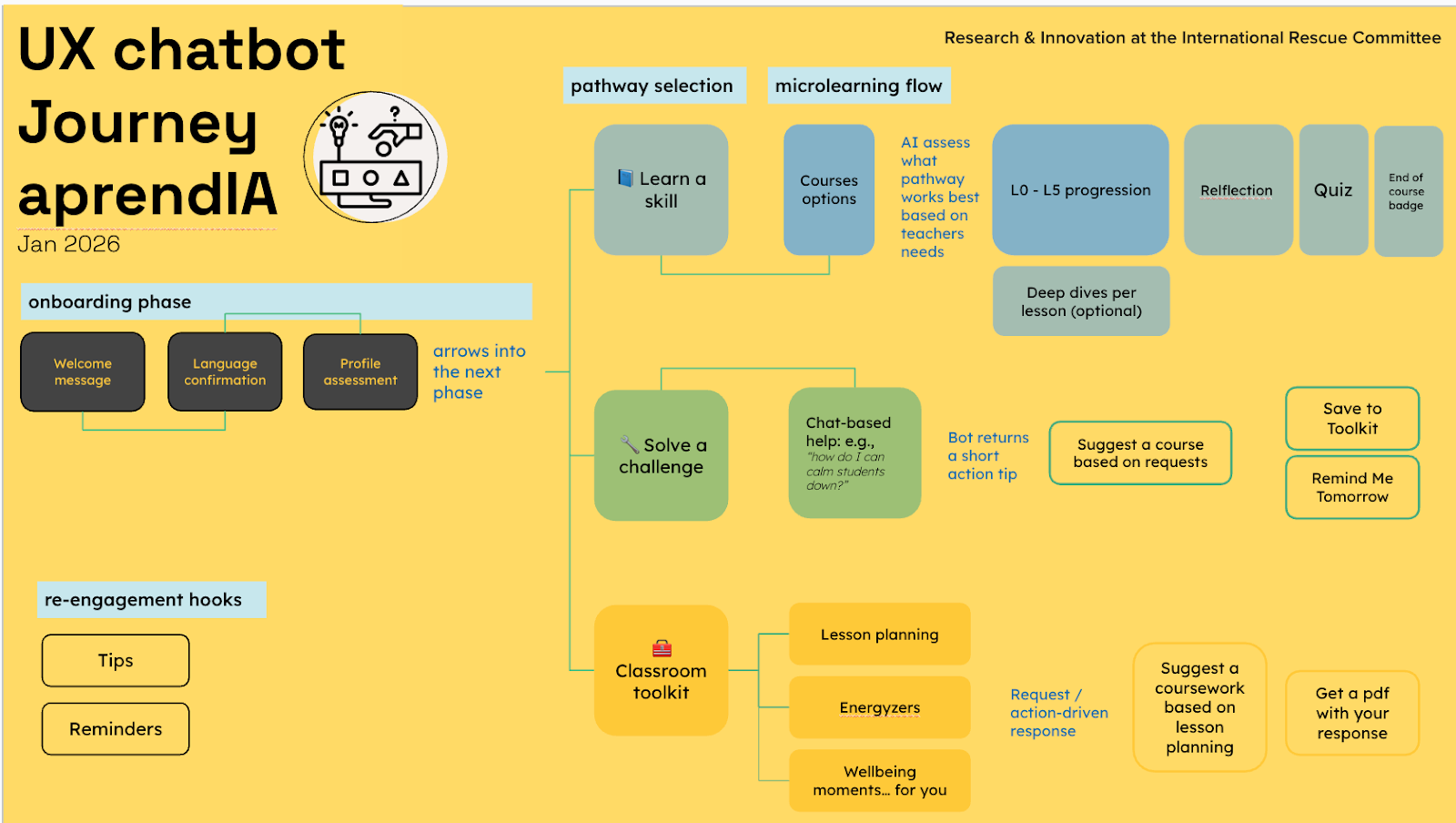

3. We replaced the two-pathway journey with three entry points

The finding: Teachers were not arriving with one shared motivation or urgency. The qualitative review showed a wide, uneven mix: phonics strategies, reading lesson plans, number sense activities, songs, games, adapting to scarce resources. The ask-a-question flow was too open-ended.

What changed: From onboarding, teachers will soon be able to choose among three immediate pathways: Learn a skill, Solve a challenge, or Classroom Toolkit. Sessions are designed for low bandwidth and interruptions — short microlearning, quick help, toolkit use — rather than long uninterrupted sequences. The product logic is simple: aprendIA becomes more intuitive when it does not force all teachers through the same door.

Latest UX Journey Map

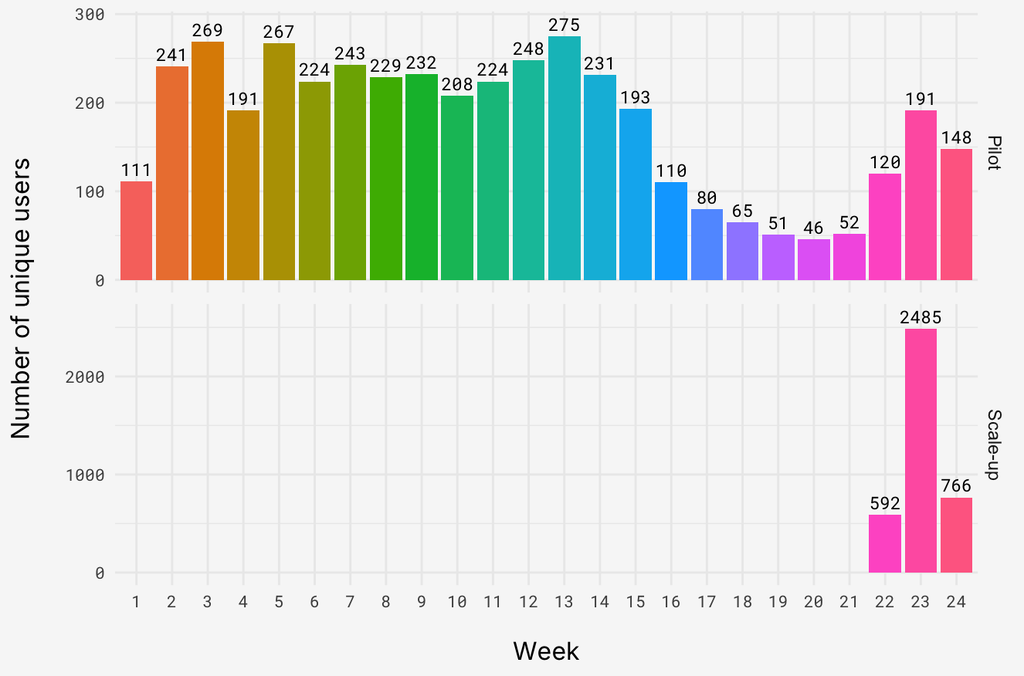

4. We built implementation quality into the product model

The finding: The declining retention pattern in the scale-up, compared with the pilot, was initially alarming, but it most plausibly reflects an implementation issue rather than a pure product issue. The more stable pilot pattern correlated with consistent support infrastructure: orientation workshops, WhatsApp support groups, and facilitator follow-up.

What changed: We stopped treating in-bot support and human support as separate tracks. To address scale-up challenges, we are standardizing training for scaling partners and integrating virtual support groups into the WhatsApp groups that already exist in each school. Peer sharing is part of the trust and motivation loop, not an afterthought.

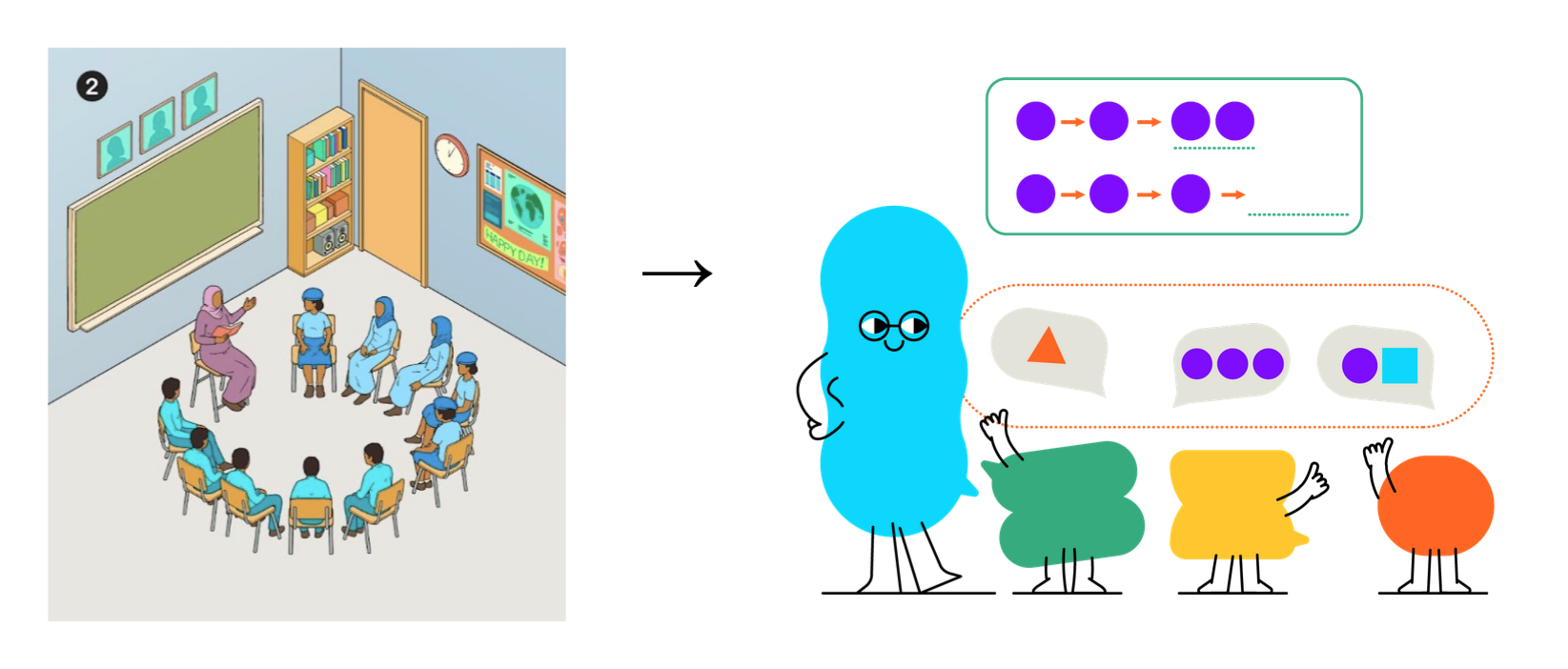

5. Building comprehension through art

The finding: Graphic representation within micro-course work is important to teachers. Based on earlier learnings that abstractions (e.g., dots representing children) did not translate well, we originally developed culturally specific graphics in hopes of improving comprehension and interpretation. But this approach was expensive, not transferable between contexts, and still created confusion among teachers.

What changed: We created culturally ambiguous graphics that are not tied to a single context. More importantly, they are colorful and fun, and they give the aprendIA mentor a stronger sense of identity. Feedback on this update from initial interviews has been positive, and we will continue tracking bot engagement to assess comprehension.

The two evidence streams that drove these changes

These five product changes did not emerge from intuition. They came from two evidence streams that are most powerful when read together.

The qualitative evidence review

Tools included focus group discussions, key informant interviews, classroom observations, surveys and in-bot conversation analysis. Review of these findings gave us visibility into what teachers were actually trying to do with aprendIA, what kind of support they were seeking, and what expectations they brought to the bot.

Key signals it surfaced:

• Teachers were seeking practical, immediately usable classroom help: literacy and numeracy strategies, lesson planning, classroom management, using available instructional materials.

• Bilingual use of English and Hausa was common, in both text and audio.

• Some teachers were looking not just for pedagogical guidance, but for affirmation, motivation, and emotional support. This had not been foregrounded strongly enough at the start.

• Confusion and off-topic requests revealed gaps between teacher expectations and product logic.

Limitation worth naming: the qualitative review is exploratory and descriptive. It is strong signal, not impact evidence. It tells us what teachers were trying to do and where friction appeared, not whether aprendIA changed outcomes.

The product analytics review

This review helped us understand how teachers were behaving inside the product itself — particularly around Day 1 engagement, return behavior, and early drop-off.

Key findings:

• Day 1 session count is the most consistent and actionable predictor of return. Each additional first-day session was associated with 34% higher odds of returning on Day 2.

• The more important story is the gap between pilot and scale-up cohorts, not the churn number in isolation. Something changed between those two phases, and it points to implementation rather than the product interface itself.

• Cleaner registration and orientation data are still needed to go further on causality. What we have are strong signals and decision-useful patterns.
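"34% higher odds" is not the same as "34% more likely." Assuming the figure is a per-session odds ratio from a logistic-style model (an assumption; the model behind the analytics review is not described here), the minimal Python sketch below shows how an odds ratio of 1.34 translates into a Day 2 return probability. The 40% baseline is a hypothetical value chosen only for illustration, not a finding from the review, and the function names are likewise illustrative.

```python
def odds(p: float) -> float:
    """Convert a probability to odds: p / (1 - p)."""
    return p / (1.0 - p)


def prob_from_odds(o: float) -> float:
    """Convert odds back to a probability: o / (1 + o)."""
    return o / (1.0 + o)


def predicted_return_prob(baseline_p: float, odds_ratio: float, extra_sessions: int) -> float:
    """Hypothetical helper: Day 2 return probability after `extra_sessions`
    additional Day 1 sessions, assuming the odds ratio applies multiplicatively
    per session (as it would in a logistic model)."""
    return prob_from_odds(odds(baseline_p) * odds_ratio ** extra_sessions)


BASELINE_P = 0.40   # HYPOTHETICAL Day 2 return rate at baseline, for illustration only
ODDS_RATIO = 1.34   # "34% higher odds" per additional first-day session

for k in range(4):
    p = predicted_return_prob(BASELINE_P, ODDS_RATIO, k)
    print(f"{k} extra session(s): {p:.1%} predicted Day 2 return probability")
```

Under this hypothetical baseline, one extra session moves the predicted return probability from 40% to about 47%, not to 53.6% (40% × 1.34). The gap between the two widens as the baseline rises, which is why the odds framing matters when reading the headline number.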

Limitation worth naming: the analytics review is clear that we have decision-useful patterns, not conclusive proof on every result that matters most. The roadmap ties product analytics to a wider measurement system — dashboards, event schemas, outcome proxies, and explicit separation of product-level from program-level metrics.

Why these two streams work together

The product analytics review shows where value is being lost. The qualitative evidence review helps explain why — surfacing teacher expectations, practical needs, language realities, and confidence gaps that usage metrics alone cannot reveal.

Neither is sufficient on its own. Both together give us something more useful: a picture of where fit between teacher need and product offer is improving, and where it still is not.

What this means beyond the product

For aprendIA, the work has not been to make the AI do more in the abstract. It has been to reduce mismatch: between what teachers need in the moment and what the product offers, between the support inside the bot and the support around it, and between what AI can generate and what teachers can actually use.

That has made the product more specific, not more expansive. Clearer entry points. Tighter support logic. Stronger reinforcement around use. A more disciplined understanding of where value is actually won or lost.

The broader implication is a product standard that may apply beyond aprendIA: in crisis-affected contexts, implementation quality is part of product quality. Onboarding is product work. Retention is not just a downstream metric — it is a signal about whether the first exchange established real value.

The evidence we have today is strong enough to show where fit is improving and where it is not. Changes forthcoming based on recent findings include:

• Additional courses: Teacher wellbeing, Action-based learning, Safeguarding

• Additional pathway: Classroom Toolkit, including lesson planning, wellbeing moments for teachers, and energizers

• Additional features: PDF generation, Text to Voice in Hausa, Tips